One of the largest AI conferences is NeurIPS, which takes place annually in December. I spent some time browsing the presentations from the 2018 conference and found an interesting one by Edward W. Felten of Princeton University titled “Machine Learning Meets Public Policy: What to Expect and How to Cope.”

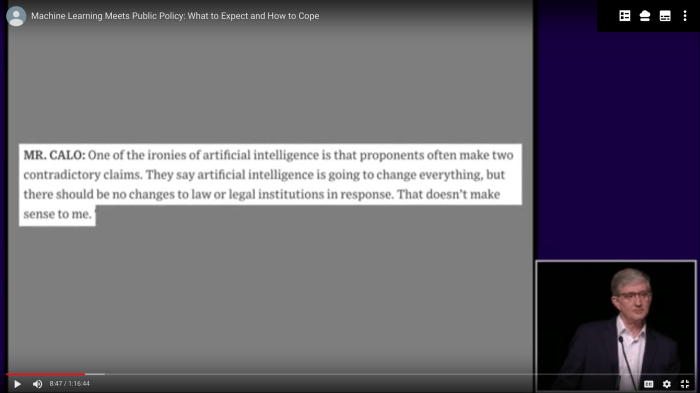

Felten kicks off his talk by highlighting what people in public policy are hearing about AI: overwhelmingly, warnings that regulations need to be put in place. People like Henry Kissinger and Elon Musk have already sounded the alarm to policy makers.

His thesis is that the best policies will come from technical people partnering well with policy makers, with each side trusting the other's expertise. That trust comes from being engaged and constructive in the policy-making process over time.

It was interesting to see some attendees push back on this thesis. One counterpoint was that many industries have self-regulating bodies, such as FINRA, and that this could be an option for machine learning. Felten disagrees, arguing that self-regulating bodies work well when they are publicly accountable and are easily replaced when they are not.