My #AIFebruary project is focused not just on learning about artificial intelligence, but also on its applications in my field, HR and recruiting. With that in mind, I enjoyed these thoughts published in July by the Forbes Human Resources Council. Members were asked what a future with AI might look like in our field. Some top answers:

Enhance efficiency. Stacey Browning, President of Paycor, advocates for humans and technology working together to scale a high-touch and responsive recruiting process.

Automation and a human touch don’t have to be mutually exclusive. Strategically combining them can deliver unrivaled results. In recruiting, automation’s infinitely scalable levels of efficiency mean that, regardless of the volume of candidates, each receives a timely correspondence. For candidates, being kept in the loop with a thoughtful and sincerely worded email is what makes the difference.

Stacey Browning

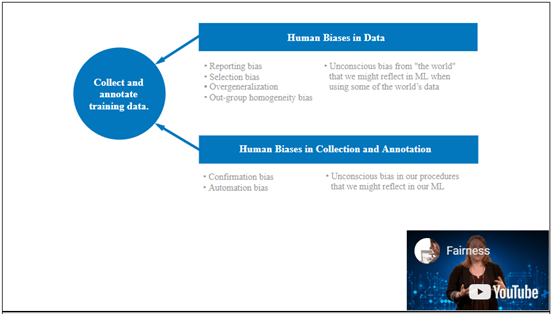

Reduce bias. Sherry Martin of Denver Public Schools highlights how assessments can be analyzed for bias in both language and outcomes, then adjusted over time to minimize adverse impact, ideally leading to a wider variety of job candidates.

Simpler sourcing. Sourcing is a popular target for up-and-coming AI tools, and Heather Doshay at Rainforest QA talks about the impact of better matching candidates to jobs.

Sourcing is a time-intensive pain point for most talent professionals, and providing well-matched candidates to companies would significantly speed up the top of the recruiting funnel and increase the quality of hires.

Heather Doshay

Replace administrative tasks. This comment from John Feldmann at Insperity Jobs groups together the time-consuming but critical tasks that are part of nearly every recruiting process.

AI will be valuable in automating repetitive recruiting tasks such as sourcing resumes, scheduling interviews and providing feedback. This will allow recruiters and HR managers the opportunity to focus on strategic work that AI will most likely never replace, such as connecting with top talent, providing a more personalized interview experience and establishing training and mentoring programs.

John Feldmann

Stay compliant. Compliance is a critical concern in recruiting, and Char Newell thinks that AI could help recruiting organizations automate this part of the workload.